Source: Simon Dannhauer/iStock via Getty Images.

A study conducted by 451 Research from S&P Global Energy Horizons examines how information security teams have changed over the past 12 months; whether security leadership is positioned to succeed; how teams are affected by key technological changes, such as the integration of AI; skill sets needed today and tomorrow; and the relative complexity of recruiting and retaining security professionals.

The Take

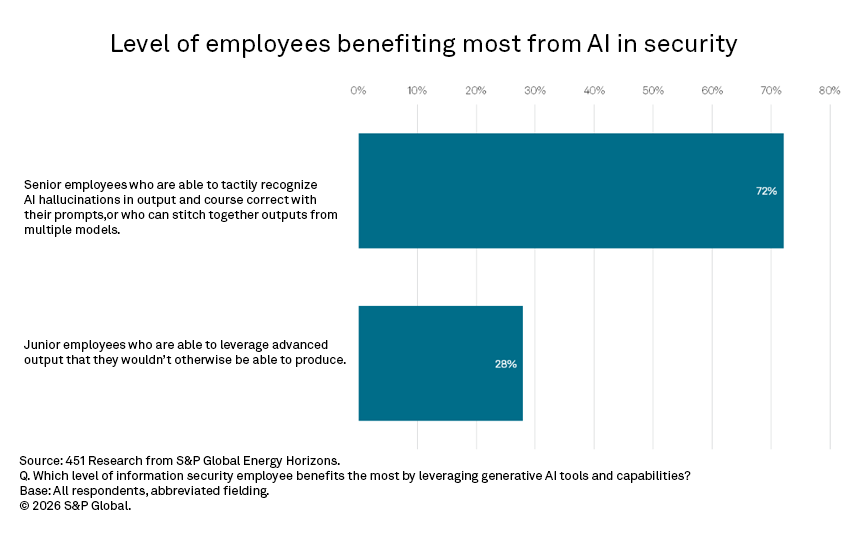

Three years ago, early generative AI integrations in security operations platforms primarily took the form of chat interfaces within their tooling ecosystem. These interfaces enabled natural language queries, incident summarization and the potential automation of routine investigative tasks. Vendors framed early use cases around the ability to uplevel junior or Tier 1 analysts in security operations centers (SOCs). Several years into broader GenAI and agentic integrations, that upskilling narrative appears to have been displaced. Security leaders now report that the primary beneficiaries of AI-assisted workflows are senior analysts rather than junior staff. About 72% of respondents to this study say that senior professionals, who recognize hallucinations in output and can course-correct through their prompts, benefit most from AI integrations. Only 28% believe junior employees derive the primary benefit by using AI to generate output they would not otherwise be able to produce. The implications are profound, in security and beyond: AI may compress the labor hierarchy by automating tasks that were once performed by the trainees who would become tomorrow's experts.

Summary of findings

Human oversight of AI output continues to be necessary for optimal results. The results from our Organizational Behavior 2025 survey are not entirely unexpected: If humans are to remain “in the loop” to check the results of AI, it will be seasoned experts (people who have built up tacit knowledge through thousands of repetitions of the work that AI now performs) who can most readily distinguish correct from incorrect results. Moreover, they can offer course correction and evaluate the results of multiple models to determine the best fit for a given task. Research also suggests that giving AI models more sophisticated prompts improves the likelihood of receiving comprehensive and correct results.

AI is already affecting the entry-level hiring market, raising several serious questions. If the lower rungs of career ladders are knocked out by AI taking over tasks that were formative learning opportunities for new employees, what will replace this knowledge-creation activity? Who will become the senior employees who provide the necessary human-in-the-loop functions if people have no path to gain that experience? Even major AI developers have begun examining this issue. Research released by Anthropic found that programmers who rely heavily on AI assistance perform significantly worse when later asked to explain or reason about the code produced. Our study suggests that as automation increases, engineers must retain the ability to detect errors and guide model output. That skill will erode, or may never be built in the first place, if uncritical overreliance on AI output becomes the norm.

While skills related to leveraging GenAI (37%) and machine learning (35%) rank in the middle of the pack in importance for security teams today, they are the top two skills cited as inadequately addressed (machine learning, 36%; GenAI, 35%). As “AI for security” GenAI features are integrated into security tooling from SecOps to application security, this shortfall represents a readiness gap that security leaders are flagging in what is quickly becoming a transitional phase of AI integration. Although security tools have long used AI techniques to identify anomalous behavior, AI is now moving up the stack to automate processes.

“Security for AI” controls have been steadily growing in usage. The use cases are still at an early stage, but when security leaders were asked which controls they have already implemented, the top three were sensitive information filtering (40%), coding guardrails (31%) and content filtering (30%). Secrets detection (27%) and controls that prevent excessive usage, such as loop detection (25%), are also emerging as increasingly used controls. In many cases, threat modeling, and the choice of appropriate security controls that follows from it, depends on how an organization is using GenAI. Use cases range from straightforward use of public GenAI applications (present in 20% of surveyed organizations) or of applications with a GenAI component, such as a chat feature (26%), to more complex undertakings, including building internal applications that use a GenAI model with the organization’s own data (20%). Each category of use case carries its own security requirements; today, many of these requirements center on the safe use of popular frontier models. But an organization building an application, for example, may require comprehensive security testing, including red-teaming or application security-centric tests, for which the expertise to conduct such tests effectively is still developing within the security industry.

The technology labor market has continued to affect organizations’ information security teams. About 23% of security professionals report plans to add to their teams, against 17% who plan to reduce team size. While net hiring remains positive, this represents a correction from 2022, when 37% of surveyed organizations were adding security staff. About 38% of respondents strongly agree that their current team size is adequate in the face of the security risks their organizations face, while 46% somewhat agree. Respondents say recruiting information security professionals is slightly easier than in 2024: On a 10-point difficulty scale, they rate it an average of 5.6, close to the 5.9 average in 2024 but well below the 7.1 average recorded in 2022. Retention difficulty averages 5.3, down from 5.8 in 2024 and 6.0 in 2022; the changes there have not been as drastic as on the hiring side. The most significant change to security teams is the integration of managed security services, primarily for skill augmentation (42%). About 17% of teams report new leadership, such as a new chief information security officer (CISO). About 88% believe their organization’s CISO is positioned to succeed, at least in terms of the role’s organizational placement and seniority.

Email continues to pose the greatest threat as an entry point for downstream security incidents, cited by 30% of respondents, dwarfing the next-highest category, social media, at 11%. Its prominent role in human-computer interaction security, where human trust rather than technical flaws is exploited, has driven a range of investments to combat social engineering, from email security to phishing simulation and training. The problem is expected to get worse: 43% of respondents report they are very concerned about how GenAI is enabling more complex forms of business email compromise, and another 43% say they are somewhat concerned. This is a near reversal in the level of concern compared with two years ago, indicating that AI is enabling attackers as well as defenders. “Hackers,” or bad actors, remain the most concerning threat group (19%), but they are closely followed by actors attacking on behalf of a nation-state (17%), signaling the continued role of international politics in information security.
