Source: S&P Global Media Portal via Adobe Stock.

Modern IoT and AI programs are being reshaped by a shift toward distributed intelligence: the movement of data processing, model execution and decision‑making away from centralized systems and closer to where data originates. Findings from a study conducted by 451 Research from S&P Global Energy reflect this transition, showing how organizations are redesigning their architectures to handle faster, more heterogeneous operational demands. A majority now reports real-time or near-real‑time requirements for AI‑driven decisions: 39% of organizations need sub-second latency, and a further 43% need responses within seconds to minutes. This need for speed is driving changes in where data is filtered, how models are deployed, and what types of AI are applied. The result is a landscape where efficiency, trust in data, and model placement matter as much as the analytic techniques themselves, and where architectural choices increasingly determine AI’s value in production.

The Take

Vendors selling into the IoT-AI ecosystem face a market that is restructuring its priorities around architectural pragmatism, data discipline and operational responsiveness. Buyers are no longer experimenting; they are refining. The emphasis on near-real‑time inference, growing dependence on telemetry‑driven model training, and widespread data filtering at the edge all point to customers wanting systems that work reliably under real‑world conditions rather than idealized architectures. With roughly four in five organizations requiring near-real‑time or sub-second latency, especially those running critical operational AI processes rather than nice-to-have GenAI outputs, expectations for performance are tightening. Vendors should focus on solutions that reduce data noise, simplify model deployment across heterogeneous environments and support distributed decision‑making without adding operational burden. Clear value will come from reducing complexity, improving data quality and enabling AI models to operate where they have the greatest impact. The winners will be those who design for real constraints rather than for theoretical scale.

Summary of findings

AI adoption is becoming standard in IoT programs. Among organizations using or planning to use IoT, 43% already run AI in production and 33% are in proof-of-concept. Only 6% report no plans to use AI. This distribution shows that AI has largely moved from experimentation to operational use, and it is becoming an expected element of IoT project design rather than an optional enhancement.

AI inferencing today is a hybrid function, dependent on performance requirements and operational demands. Nearly half (48%) of organizations perform inferencing in enterprise data centers or private clouds, compared with 45% using AI-accelerated edge devices and 41% using industrial control systems. This distribution suggests a transitional phase in which centralized environments still anchor many deployments. It indicates that while edge capabilities are expanding, organizations continue to rely on controlled, established infrastructure for inference workloads.

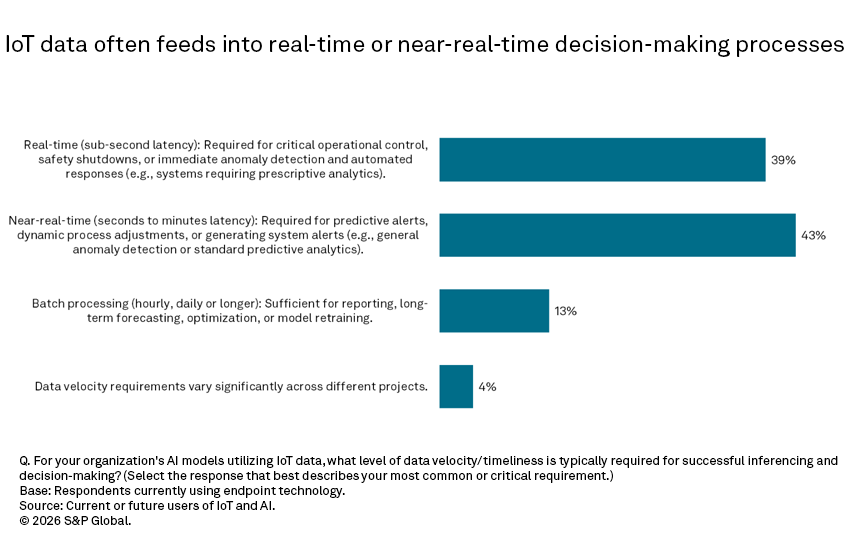

Many IoT-AI use cases require real-time responsiveness. More than two in five organizations (43%) report needing near-real-time latency (seconds to minutes), and 39% require sub-second performance. Only 13% rely on batch processing. Taken together, 82% of organizations depend on responses within minutes or less, which shapes decisions about where inferencing occurs and how data pipelines are constructed.
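To make the link between latency requirements and inference placement concrete, the following is a minimal sketch rather than a method described in the study. The three tiers mirror the survey categories, but the 600-second upper bound for "near-real-time", the placement rules and the function names are all hypothetical assumptions chosen for illustration.

```python
from enum import Enum


class LatencyTier(Enum):
    SUB_SECOND = "sub-second"
    NEAR_REAL_TIME = "near-real-time (seconds to minutes)"
    BATCH = "batch"


# Assumption: the survey says "seconds to minutes" without an upper bound,
# so 10 minutes is used here purely as an illustrative cutoff.
NEAR_REAL_TIME_MAX_SECONDS = 600.0

# Assumption: illustrative placement rules, not findings from the survey.
PLACEMENT_BY_TIER = {
    LatencyTier.SUB_SECOND: "AI-accelerated edge device or industrial control system",
    LatencyTier.NEAR_REAL_TIME: "edge device, enterprise data center or private cloud",
    LatencyTier.BATCH: "enterprise data center or private cloud",
}


def classify_latency(max_response_seconds: float) -> LatencyTier:
    """Map a use case's maximum tolerable response time to a latency tier."""
    if max_response_seconds <= 0:
        raise ValueError("max_response_seconds must be positive")
    if max_response_seconds < 1.0:
        return LatencyTier.SUB_SECOND
    if max_response_seconds <= NEAR_REAL_TIME_MAX_SECONDS:
        return LatencyTier.NEAR_REAL_TIME
    return LatencyTier.BATCH


def suggest_placement(max_response_seconds: float) -> str:
    """Return the illustrative placement for a given latency requirement."""
    return PLACEMENT_BY_TIER[classify_latency(max_response_seconds)]
```

Under these assumptions, a use case that tolerates at most 0.2 seconds would be steered toward the edge, while a nightly report would stay in a centralized environment. A real placement decision would also weigh cost, energy use and data governance.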

Telemetry data forms the backbone of AI training inputs. Nearly two-thirds (65%) of organizations use network and IT telemetry for AI model training, compared with 40% using production and quality data, and 39% using environmental data. The reliance on telemetry reflects the availability and consistency of machine-generated data streams. This indicates that many AI models are grounded in operational visibility rather than complex sensor or contextual data sources.

Generative AI is widely used alongside IoT data. Two-thirds (67%) of respondents using IoT and AI report using generative AI, surpassing traditional machine learning at 63% and deep learning at 41%. This suggests that organizations are incorporating GenAI into practical tasks such as summarization or content generation. The breadth of adoption indicates that GenAI is being integrated into workflows without requiring highly specialized modeling expertise.

Most organizations reduce a significant share of IoT data at the edge. Nearly half of respondents (46%) report moderate filtering of IoT data at the edge, and another 31% report high filtering. This means roughly three-quarters of organizations transmit less than half of their raw IoT data upstream. The filtering trend signals that cost control, bandwidth management and timely data processing are driving architectural decisions toward more distributed handling of data.
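The study does not specify how organizations filter data at the edge. As one illustration, the sketch below uses a deadband filter, a common technique assumed here for the example, which forwards a reading only when it differs from the last forwarded value by at least a set threshold. The function name, the sample readings and the threshold are hypothetical.

```python
def deadband_filter(readings, threshold):
    """Keep a reading only if it moved at least `threshold` from the last kept value."""
    kept = []
    last_sent = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) >= threshold:
            kept.append(value)
            last_sent = value
    return kept


# Hypothetical temperature samples from a single sensor.
readings = [20.0, 20.1, 20.2, 21.5, 21.6, 19.0, 19.1, 19.05]
kept = deadband_filter(readings, threshold=1.0)

# Share of raw readings that never leave the edge device.
reduction = 1 - len(kept) / len(readings)
print(kept, f"{reduction:.1%} filtered")
```

With these sample values, three of the eight readings are forwarded upstream, so 62.5% of the raw data is filtered at the edge. The threshold trades bandwidth and cost against the risk of discarding small but meaningful changes.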

Data quality remains the most common challenge in AI-IoT projects. More than two in five respondents (43%) cite data quality or accuracy as a key barrier, the highest response rate of all surveyed challenges. Issues such as governance, cybersecurity and interoperability appear slightly less frequently. The persistent concern about data quality shows that inconsistent or noisy inputs continue to hinder the development and reliability of AI models built on IoT data.

Energy and sustainability considerations influence AI deployment choices. Almost half of respondents (46%) say energy efficiency is an extremely significant factor in AI architecture decisions, and 43% say it is moderately significant. This means nearly nine in 10 organizations factor energy use into model placement and hardware selection. The results show that sustainability is becoming an operational requirement rather than a peripheral concern in the design of AI-enabled IoT systems.
