Source: Johnny Greig/E+ via Getty Images.

Edge computing is entering a phase in which architectural diversity and operational complexity are reshaping how organizations build, fund and secure distributed systems. Findings from a study conducted by 451 Research from S&P Global Energy indicate that organizations are no longer treating the edge as an experimental adjunct to cloud, but as a differentiated execution layer that requires its own sourcing logic and vendor mix.

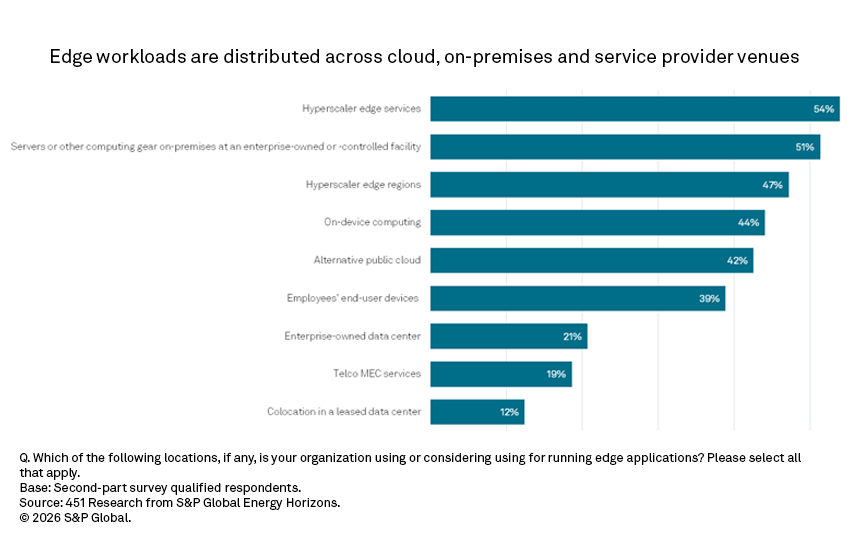

A defining signal is that 54% of organizations now use or are considering hyperscaler-delivered edge services, underscoring a shift toward hybrid, cloud-adjacent patterns that coexist with on-premises control.

At the same time, organizations are widening their supplier landscape, bringing in specialized AI, operational technology and security vendors to harden and scale edge deployments. These dynamics point to a central theme: the edge is fragmenting technologically, while consolidating operationally, forcing organizations to balance openness, interoperability and life-cycle discipline as AI workloads place new demands on infrastructure strategy.

The Take

Vendors targeting the edge computing market face a landscape that is simultaneously expanding, fragmenting and hardening. The survey results signal that buyers are leaning into hybrid edge architectures anchored by hyperscaler services while still demanding flexibility across venues and vendors. This diversification creates opportunities — but also higher expectations for interoperability, life-cycle tooling and integration depth. As organizations widen their supplier rosters to include AI, operational technology (OT) and security specialists, vendors must assume they will be one contributor in a multiparty stack, not the controlling center of gravity. The smart play is to prioritize clean integration surfaces, predictable consumption models and robust automation that reduces operational friction at distributed sites. Buyers are also segmenting edge AI sourcing from general infrastructure decisions, meaning vendors cannot rely on incumbency to win emerging AI workloads. Clear alignment to AI deployment patterns, hardware acceleration needs and model life-cycle challenges will increasingly determine relevance.

Summary of findings

Hyperscaler-anchored, multivenue edge is standard. Organizations most commonly use or are considering hyperscaler edge services (54%), alongside on-premises organizational sites (51%) and hyperscaler edge regions (47%), a clear pattern of blending cloud adjacency with local control. This mix reduces architectural lock-in and allows teams to place workloads where latency, data gravity or cost dictate, rather than committing to a single venue model.

Opex preference is real, but capex resilience matters. When funding edge infrastructure outside a centralized public cloud, 41% prefer opex, 35% split evenly between opex and capex, and 24% still prefer capex. Notably, among long-tenured edge users (5+ years), opex preference rises to roughly 51%, while newer deployments skew more mixed, signaling that as estates mature, teams gravitate toward predictable consumption. That shift has implications for pricing, refresh and managed service uptake.

Buyers want a lead vendor, just not a closed stack. Nearly half (46%) rely on a single principal vendor for edge, and another 37% want that lead vendor to provide mix-and-match options. Where a principal exists, IT equipment/software vendors (53%) outrank public cloud providers (31%), indicating trust in infrastructure incumbents — yet with an expectation of ecosystem breadth rather than monolithic offerings.

DIY edge is niche, and “open consideration” skews to telco and cloud. Only 17% say they built their edge in DIY fashion with no primary vendor. Among those without a designated lead, consideration remains broad — multiple system operators/telcos (70%) and public cloud providers (67%) score highly — implying that even DIY-leaning teams plan to source capabilities from large-scale operators as projects harden from pilots to production.

Security and AI ops are the gating issues. Top barriers to edge execution are software vulnerabilities across distributed assets (41%) and the complexity of deploying/managing AI models at scale (39%), followed by data governance (34%) and GPU availability (28%). These pain points shift budgets toward software life-cycle hygiene, inference tooling and supply-assured accelerators — priorities that outweigh facility constraints for most.

AI is the accelerant — and it is rewriting sourcing lists. AI has significantly accelerated edge adoption for 52% of respondents, and 72% have reevaluated or added vendors due to AI. The most commonly added are OT integration specialists (52%) and AI/OT cybersecurity vendors (50%), underscoring that practical AI at the edge collides with industrial systems and risk domains where incumbents lack depth.

Edge AI buying often runs on a separate track. A combined 88% agree that their purchasing for edge AI (inference/training) is separate from general edge infrastructure. Primary reasons include separate budgets (42%) and the need for specialized silicon not offered by the principal infrastructure vendor (31%). For suppliers, this means winning the platform does not automatically win AI workloads; for buyers, it institutionalizes dual-track governance.

AI is reshaping strategy — not just speed. Beyond acceleration, 18% say they have changed their edge strategy completely because of AI. That level of pivot implies near-term re-platforming risks (e.g., hardware/SDK choices). However, there is also an upside: buyers are open to new categories (e.g., edge MLOps software at 49% and specialized model providers at 48%) that simplify life-cycle management and compress time to value.
